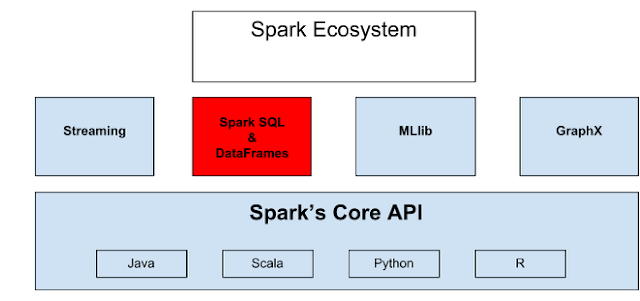

- Pyspark-write-json-to-hdfs
